Chatbots work well enough when the interaction stays simple. A user types a clear question. The system finds a close match. A response comes back. It feels efficient. Predictable, even.

Move that same system to a phone call, and something shifts. Calls move faster. People do not speak the way they type. They hesitate. They interrupt themselves. They change direction halfway through a thought. There is no clean structure to rely on. What looked capable in text starts to feel rigid in conversation.

It is not that the system fails immediately. It drifts. Small misses accumulate. A question repeated. A response slightly off. A pause that lingers a bit too long. That drift is usually where the interaction breaks.

Voice AI starts from a different assumption. That difference becomes visible once the interaction moves off the screen and into a live call.

Why do chatbots struggle to hold real conversations?

It comes down to what they were designed to do.

Most chatbots are built to respond to inputs that are already somewhat structured. Even when powered by newer models, they still rely on patterns that work best when the question is clear and contained. In text, users naturally help the system by simplifying what they say.

On a call, that cooperation disappears. People speak in incomplete thoughts. They reference earlier interactions without context. They say things like, “I spoke to someone about this already,” or “the issue from last time is still there,” and expect continuity. A chatbot tries to interpret that literally. Sometimes it guesses correctly. Often it does not. The problem is not just the misunderstanding. It is how the system reacts after that.

Instead of adjusting, it tends to fall back on a safe response. It repeats a question. It redirects to the menu. It answers something adjacent but not quite right. Each small miss adds friction: not enough to end the call immediately, but enough to make the user question whether this is going anywhere. That slow drift is what breaks the interaction, and that is where voice AI comes in.

Why do phone calls expose chatbot limitations so quickly?

A phone call does not give the system much room to recover. In text, delays are tolerated. A few seconds pass; the user assumes the system is processing. On a call, that same delay feels different. Silence is noticeable. It creates doubt almost instantly.

There is also no chance to revise what was said. In text, a user can rephrase, clarify, or try again with more precision. On a call, they move forward. If the system misinterprets something, the conversation continues down the wrong track. That is where things start to feel disjointed.

Another factor is overlap. People interrupt each other in natural conversation. They start speaking before the other side finishes. Most chatbots are not built to handle that kind of flow. They expect strict turn-taking. When that expectation breaks, so does the rhythm. Even tone becomes a signal. A slight change in pace or emphasis can indicate frustration. Humans pick up on that without thinking. Chatbots usually do not.

So, the system continues as if nothing has changed, while the caller is already disengaging.

What makes voice AI different in live conversations?

Voice AI systems start with a different assumption. Conversations are not clean, and they are not always logical.

Instead of waiting for a perfectly formed input, they work with fragments. They track how something is said, not just what is said. Pace, pauses, repetition, even slight shifts in tone all become part of the signal. This does not mean they understand everything. It means they have more ways to stay on track.

For example, if a caller circles around a point without stating it directly, a voice system may still pick up the intent. If the caller interrupts, the system adjusts rather than resetting. If the response is unclear, it can ask for clarification without breaking the flow entirely.
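To make the interruption case concrete, here is a minimal sketch of how a system might yield to a caller without losing what it has already heard. It is an illustration under stated assumptions, not how any particular product works: `TurnManager`, its fields, and the `"yield"` / `"listen"` action names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TurnManager:
    """Toy turn-taking model (hypothetical): tracks who is speaking and what was heard."""

    agent_speaking: bool = False
    heard: List[str] = field(default_factory=list)  # caller fragments, kept across interruptions
    cut_off: str = ""  # agent text that was interrupted, if any

    def agent_say(self, text: str) -> None:
        """The agent starts speaking `text`."""
        self.agent_speaking = True
        self.cut_off = text

    def agent_done(self) -> None:
        """The agent finished its sentence without being interrupted."""
        self.agent_speaking = False
        self.cut_off = ""

    def caller_speech(self, fragment: str) -> str:
        """Record a caller fragment and return the action taken."""
        self.heard.append(fragment)  # context is never reset
        if self.agent_speaking:
            # Barge-in: stop talking and give the floor to the caller.
            self.agent_speaking = False
            return "yield"
        return "listen"
```

The point of the design is that an interruption changes who holds the floor but does not clear `heard`, so earlier fragments stay available when the system works out what the caller wants.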

Timing plays a role, too. Responses are tuned to feel immediate. Even when processing takes time, the system fills the gap in a way that keeps the conversation moving.
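One way to fill that gap can be sketched in a few lines: start the slow work, and if it has not finished within a short window, say a brief holding phrase before delivering the answer. The threshold, the phrase, and the `respond`, `lookup`, and `say` names are assumptions chosen for illustration.

```python
import asyncio

FILLER = "One moment while I check that."  # hypothetical holding phrase
FILLER_AFTER_S = 0.1  # hypothetical threshold before silence becomes noticeable


async def respond(lookup, say) -> None:
    """Run `lookup` and speak its result, covering long waits with a filler.

    `lookup` is an async callable returning the answer text.
    `say` is a callable that speaks (here: records) a string.
    """
    task = asyncio.create_task(lookup())
    # Wait briefly; the task keeps running if the timeout expires.
    done, _pending = await asyncio.wait({task}, timeout=FILLER_AFTER_S)
    if not done:
        say(FILLER)
    say(await task)
```

A fast lookup produces only the answer. A slow one produces the holding phrase first, so the caller never sits through unexplained silence.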

There is also a difference in how failure is handled.

A chatbot often tries to complete the interaction no matter what. A voice AI system is more likely to recognize when it is not the right tool for that moment. It can escalate, hand off, or step back before the caller becomes frustrated enough to leave.

That restraint is part of what makes the interaction feel smoother.
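As a rough sketch of that restraint, a system could count consecutive low-confidence turns and hand off once a limit is reached, instead of repeating itself indefinitely. The class name, the thresholds, and the action labels below are hypothetical, and a real system would likely weigh more signals than a single confidence score.

```python
from dataclasses import dataclass


@dataclass
class EscalationPolicy:
    """Decides whether to answer, ask again, or hand off (illustrative only)."""

    min_confidence: float = 0.6  # hypothetical cutoff for "understood well enough"
    max_misses: int = 2  # hypothetical limit on consecutive misses
    misses: int = 0

    def next_action(self, confidence: float) -> str:
        if confidence >= self.min_confidence:
            self.misses = 0  # a good turn resets the count
            return "answer"
        self.misses += 1
        if self.misses >= self.max_misses:
            return "handoff"  # stop retrying and bring in a human
        return "clarify"
```

With these defaults, one unclear turn triggers a clarifying question and a second unclear turn in a row triggers a handoff.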

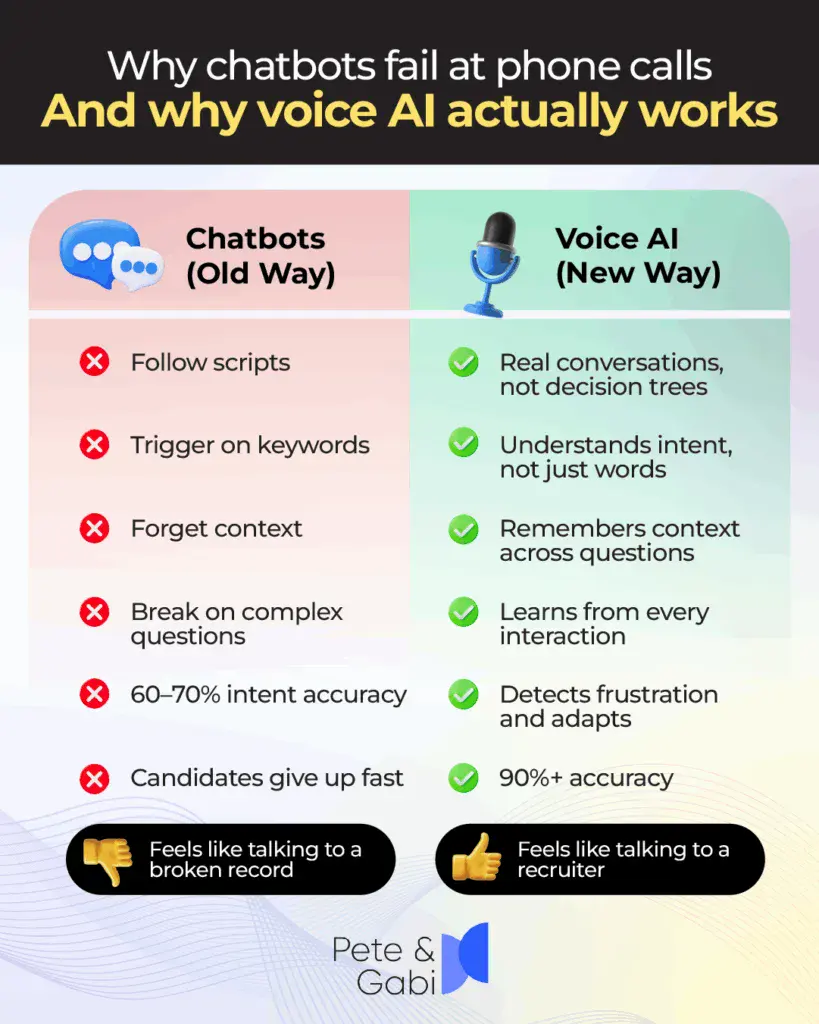

Chatbots vs Voice AI: Key differences in phone-based interactions

When the interaction moves to a call, the distinction becomes clearer.

Chatbots:

- Work best with structured inputs

- Depend on clear, complete queries

- Struggle with interruptions and overlap

- Handle delays poorly in live conversations

- Tend to repeat or redirect when uncertain

Voice AI:

- Handles unstructured, fragmented speech

- Adapts to interruptions and mid-sentence changes

- Responds in real time with minimal perceived delay

- Uses conversational signals beyond words

- Escalates or adjusts when context breaks

This is not to suggest chatbots are ineffective. In structured environments, they perform well. The limitation appears when the interaction becomes fluid.

Do the numbers support the shift toward voice AI?

There is some data, though it should be read carefully. A Zendesk report noted that a significant portion of users found it harder to distinguish between AI and human agents in certain scenarios. Around the same share felt that AI systems could respond with a level of empathy.

Those figures suggest progress, but they do not tell the whole story.

What matters more is what happens during the interaction itself. Fewer abandoned calls. Fewer repeated attempts to solve the same issue. Fewer situations where the user gives up midway.

Those outcomes are harder to measure directly, but they show up in operational metrics over time. Completion rates improve. Handling time becomes more consistent. The system does not need as many retries to reach a resolution.
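For teams that want to track these outcomes, the calculation itself is simple once calls are logged. The sketch below assumes a hypothetical `Call` record with three fields. The field names are illustrative, and any figures fed into it would come from your own call logs, not from this article.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import Dict, List


@dataclass
class Call:
    """One logged call (hypothetical schema)."""

    completed: bool  # did the caller reach a resolution?
    retries: int  # how many times a question had to be repeated
    handle_seconds: float  # total length of the call


def summarize(calls: List[Call]) -> Dict[str, float]:
    """Return completion rate, average retries, and spread of handling time."""
    if not calls:
        raise ValueError("need at least one call")
    return {
        "completion_rate": sum(c.completed for c in calls) / len(calls),
        "avg_retries": mean([c.retries for c in calls]),
        # Lower spread means handling time is more consistent.
        "handle_time_stdev": pstdev([c.handle_seconds for c in calls]),
    }
```

Comparing these three values before and after a change is one way to check whether completion is improving and handling time is becoming more consistent.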

How does this difference show up in recruiting workflows?

Recruiting is a good place to look because it combines volume, timing, and human judgment. Most hiring pipelines break down early. Not because there are no candidates, but because there is too much to process at once. Applications come quickly. Recruiters cannot respond to all of them immediately. Delays build up.

Candidates notice. A slow first response often means the candidate has already moved on. Not dramatic, just quiet. They accept another offer or stop checking for updates.

This is where a voice-based system changes the flow. Instead of waiting for manual outreach, candidates are contacted soon after applying. Initial screening happens in that same interaction. Scheduling can follow without back-and-forth emails. By the time a recruiter reviews the pipeline, it is already narrowed down.

The recruiter still makes decisions that matter. They just enter the process later, with more context and less noise. That shift reduces the time spent on repetitive tasks without removing the human element.

Where does Voice AI for hiring fit into this?

Rebecca AI is built for that early stage of hiring where most of the delay sits. It handles pre-qualification, initial screening, interview coordination, and scheduling. Candidates move through the first layer of evaluation without requiring constant recruiter involvement.

By the time the recruiter steps in, they are not starting from a blank slate. They have structured information, recorded responses, and a clearer sense of who fits the role. This does not remove uncertainty from hiring. It reduces the amount of manual effort needed to reach a decision point.

What actually changes once this is in place?

Not everything changes, and it is worth being precise about that.

Later stages of hiring remain largely the same. Conversations with finalists, alignment with hiring managers, and negotiation still require human involvement.

What changes is the front of the process.

Less time is spent reaching out to candidates one by one. Less time goes into scheduling calls or rescheduling them. Less time is lost repeating the same initial questions across dozens of candidates.

Instead, that time shifts toward evaluation and decision-making.

The workflow becomes less fragmented. Fewer interruptions. Fewer delays between steps. The process moves forward in a more continuous way.

That continuity is difficult to create manually at scale. It is where systems like this tend to have the most impact.

Why does this distinction matter more now?

Hiring cycles are not getting simpler.

Application volumes are higher in many roles. Candidate expectations have shifted. Response time, which used to be a minor factor, now shapes how candidates perceive a company early on.

At the same time, recruiters are expected to do more with the same or fewer resources.

That creates pressure at the exact point where chatbots tend to struggle. Early interaction, fast response, and unstructured communication.

Voice AI fits that space more naturally.

Not because it solves everything, but because it aligns better with how those interactions actually happen.

What should teams take away from this?

It is easy to treat chatbots and voice AI as variations of the same idea. In practice, they behave differently enough that the distinction matters.

Text-based systems still have their place. They work well when the interaction is structured and predictable.

Phone calls are not.

If the goal is to handle real conversations at scale, especially in environments like recruiting where timing matters, then the system needs to operate under different assumptions.

Less structure. Less predictability. More variation.

That is where voice AI tends to hold up better.

Not perfect. Just more consistent.

Frequently asked questions:

What is the difference between chatbots and voice AI in recruiting?

Chatbots rely on structured text inputs and work best in predictable workflows. Voice AI handles real-time conversations, including interruptions, incomplete responses, and conversational shifts, which are common in recruiting interactions.

Why do chatbots fail in phone call scenarios?

Chatbots struggle because phone calls involve unstructured, fast-moving conversations. They depend on clear inputs and turn-based interaction, which rarely holds in live calls.

Does voice AI replace recruiters?

No. Voice AI handles early-stage tasks such as outreach and screening. Recruiters remain responsible for evaluation, decision-making, and final interactions.

Where does voice AI work best in hiring?

It tends to perform better in high-volume hiring environments where response time affects candidate engagement and manual workflows create delays.

Is voice AI more accurate than chatbots?

It depends on the context. Voice AI appears to perform better in live conversations, while chatbots remain effective in structured, text-based interactions.

In simple terms, chatbots tend to rely on structure. Voice AI works around the lack of it. That difference, while subtle at first, becomes difficult to ignore once conversations move to a call.